Stories

Stories capture our projects, thoughts, reflections, and highlights. Read about our latest work.

Data & AI for Social Impact

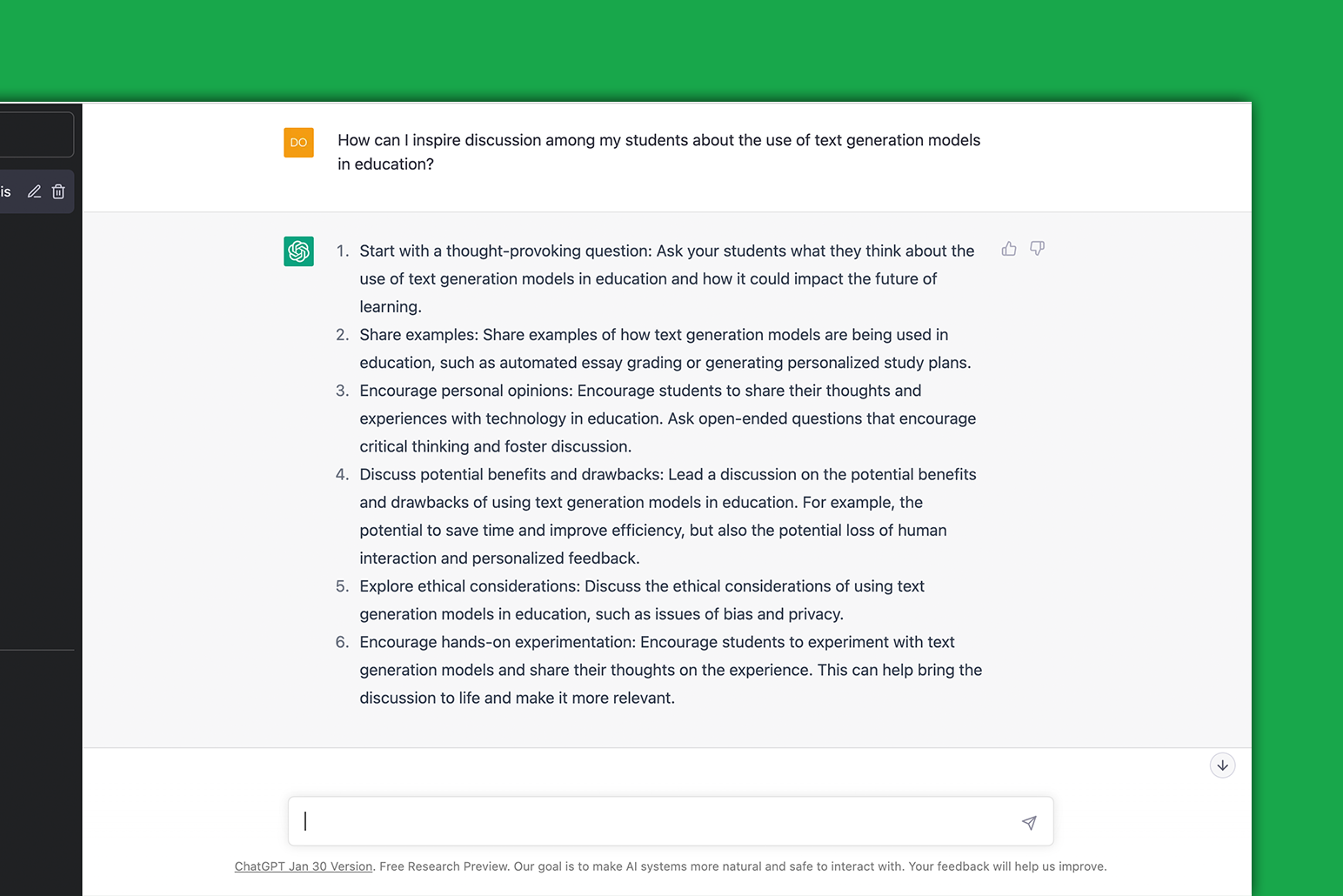

We identify and research trends and put data and AI algorithms to the test, all with one question in mind: does this stuff actually work? We explore by doing, working with both brand-new and more established innovations.

The digital age creates an ever-growing need for people to adjust to a rapidly changing world. This brings many challenges. At the Centre for Innovation, we develop solutions in a responsible, transparent and, above all, meaningful way. We believe technology can be built in a way that protects privacy and improves equality and fairness. In a time of unprecedented access to data, we try to put people first and bring the overwhelming aspects of technology back to a human scale. By doing this, we want to help people better understand the time and place we live in.

Publications

Counterfactual explanations: Can you get to the “ground truth” of black box decision making?

Insights, Projects

My Data 2020: Building Inclusive Smart Cities

Insights

Data-Driven Public Private Partnerships: 3 Areas of Risk for Public Organisations to Understand

Insights

Remote Teaching: Choosing Your Tools

Insights, Projects, Publications

Report: Secure Communication Platforms

Insights

Personal Data in Remote Teaching

Insights

Get Ahead with Data Privacy in Crisis Planning

Projects

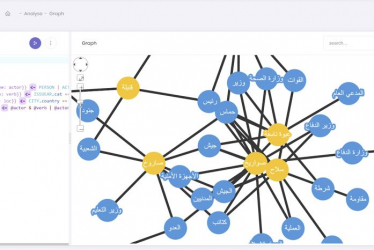

Using Social Network Analysis to Explore International Criminal Court Cases

Projects

Investigating Mass Grave Locations with Deep Learning

Projects

Using Facial Recognition in a Sensitive Context

Insights

The Holistic Data Responsibility Framework

Insights

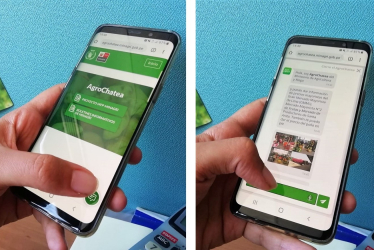

AgroChatea: helping Peruvian farmers make better deals

Insights

GDPR: Challenges and Opportunities in Innovation

Publications

Using data to analyse WFP’s digital cash programme in Lebanon

Insights

Genetic Privacy: Interview with Robert Zwijnenberg

Projects

ChitChat: A Chatbot to support WFP

Projects

ChitChat: Radio Dabanga, Free Press Unlimited

Projects

CFI Chatbot to support humanitarian organisations

Insights

Capturing the news from 2,000 daily messages from Sudan

Projects

Data-informed programming in combating AIDS

Insights

Algorithms in search of missing persons